The GEO Audit evaluates each URL across three independent dimensions:

- whether AI agents can reach the page,

- whether they can parse its structure,

- whether the content itself is easy to process.

Each dimension is scored from 0 to 100. Run the audit on a set of URLs, then filter by score to identify the pages that need attention first.

The three scores

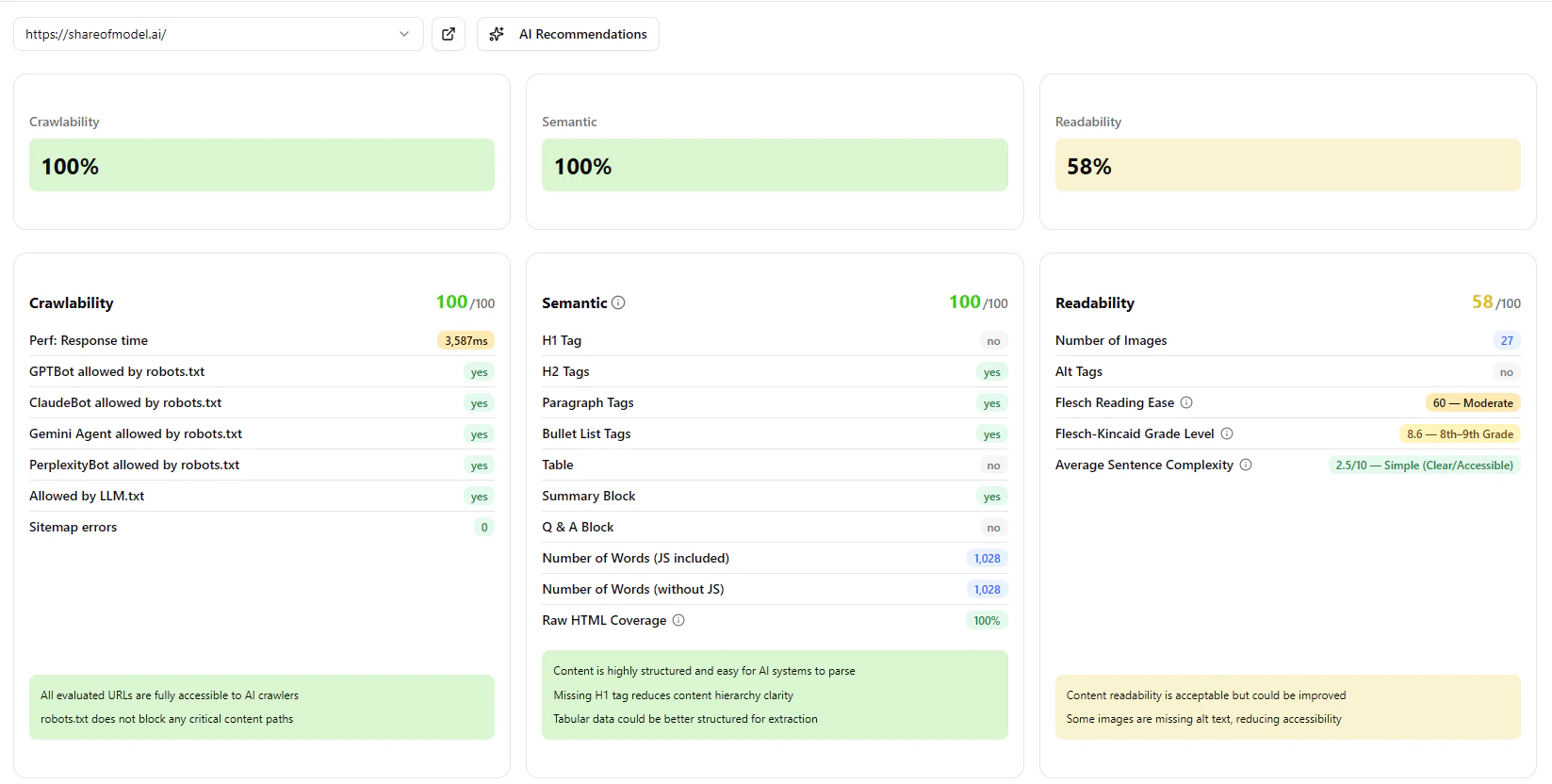

Crawlability

Measures whether AI agents are technically allowed to access the page. The audit checks robots.txt for each major AI crawler (GPTBot, ClaudeBot, Gemini Agent, PerplexityBot and others), verifies your llms.txt configuration, and flags sitemap errors. It also records page response time, since slow responses can cause crawlers to time out.

A low score means some or all AI agents may be blocked from reading the page entirely — regardless of how well-structured the content is.

| Score | What it means |
|---|---|
| 85–100 | All major AI agents can access the page. |
| 60–84 | Some agents are restricted or the page is slow to respond. |
| 0–59 | Significant access issues: content is likely invisible to multiple AI agents. |
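
The robots.txt part of this check can be approximated locally. The sketch below is not the audit's implementation. It uses Python's standard `urllib.robotparser` to test which crawler tokens may fetch a URL. The sample robots.txt, the example URL, and the `crawler_access` helper are hypothetical, and the user-agent tokens are assumed to match the crawler names listed above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, used only for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /private/
"""

# Crawler tokens assumed from the names in the text; extend as needed.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str, agents=AI_CRAWLERS) -> dict[str, bool]:
    """Return, for each agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(SAMPLE_ROBOTS_TXT, "https://example.com/products/widget")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

In this sample, GPTBot is blocked by its own rule, ClaudeBot is explicitly allowed, and PerplexityBot falls back to the wildcard group, which only restricts `/private/`.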

Semantic

Measures how well the page is structured for AI parsing. AI systems don’t read pages the way humans do. They rely on HTML structure (headings, paragraphs, lists, tables) to understand what a page is about and how to extract information. A page that looks well-designed in a browser may have very little structure in its underlying HTML.

The Semantic score evaluates the presence and quality of these structural elements, adjusted for the type of page being audited. A product page is expected to have a clear title and feature lists; a FAQ page is expected to have a Q&A structure. The score reflects whether the page meets those expectations.

The detected page type is shown as a badge next to the score. If the detection is incorrect, you can override it manually.

| Score | What it means |
|---|---|
| 85–100 | Well-structured: AI agents can parse and extract content reliably. |
| 65–84 | Acceptable structure: some improvements available. |
| 40–64 | Structural gaps that may reduce how accurately AI agents interpret the page. |
| 0–39 | Poor structure: content may be misread or ignored. |
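
To get a rough feel for how much structure a page exposes in its raw HTML, you can count the structural elements yourself. This is a minimal sketch using Python's standard `html.parser`. It only counts tags and does not reproduce the Semantic score or its page-type adjustments. The `StructureCounter` class, the `count_structure` helper, and the sample markup are hypothetical.

```python
from collections import Counter
from html.parser import HTMLParser

# Tags treated as "structural" here; this set is an assumption for illustration.
STRUCTURAL_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6", "p", "ul", "ol", "li", "table"}


class StructureCounter(HTMLParser):
    """Counts opening tags that carry document structure."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names before calling this method.
        if tag in STRUCTURAL_TAGS:
            self.counts[tag] += 1


def count_structure(html: str) -> Counter:
    parser = StructureCounter()
    parser.feed(html)
    parser.close()
    return parser.counts


# Hypothetical product-page fragment: the price sits in unstructured divs.
SAMPLE_HTML = """
<h1>Widget Pro</h1>
<p>A compact widget for small teams.</p>
<h2>Features</h2>
<ul><li>Fast setup</li><li>Offline mode</li></ul>
<div><div><span>Price: 20</span></div></div>
"""

counts = count_structure(SAMPLE_HTML)
print(dict(counts))
```

Running this against the raw HTML response, rather than the rendered page, shows what a parser sees before any JavaScript executes.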

Readability

Measures whether the content is easy for an AI system to process and summarise. The score looks at the language itself: sentence length, vocabulary complexity, reading grade level, and sentence structure. It also checks whether images have descriptive alt text, because AI agents cannot interpret images without it.

Content that is dense, highly technical, or relies on complex sentence constructions may be hard to summarise or cite, even if it is perfectly accessible and well-structured.

| Score | What it means |
|---|---|
| 75–100 | Clear, accessible content: easy for AI to process and summarise. |
| 50–74 | Acceptable: some complexity may reduce summarisation accuracy. |
| 0–49 | Dense or complex content: AI agents may struggle to extract key information. |
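
Two of the signals above, sentence length and alt text, are easy to approximate yourself. The sketch below is illustrative only: the audit's actual readability formula is not documented here, and the helper names, the naive sentence splitter, and the sample inputs are all assumptions.

```python
import re
from html.parser import HTMLParser


def average_sentence_length(text: str) -> float:
    """Mean words per sentence, splitting naively on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    words = sum(len(s.split()) for s in sentences)
    return words / len(sentences)


class AltTextChecker(HTMLParser):
    """Collects the src of every <img> whose alt is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            # An empty alt can be valid for decorative images; it is flagged
            # here only to keep the sketch simple.
            if not (attributes.get("alt") or "").strip():
                self.missing_alt.append(attributes.get("src"))


def images_missing_alt(html: str) -> list:
    checker = AltTextChecker()
    checker.feed(html)
    checker.close()
    return checker.missing_alt


# Hypothetical sample inputs.
text = "The widget installs in minutes. It also works offline. Support is included."
html = '<img src="a.png" alt="Widget on a desk"><img src="b.png"><img src="c.png" alt="">'

avg_length = average_sentence_length(text)
missing = images_missing_alt(html)
print(avg_length, missing)
```

A rising average sentence length is a cheap early warning that a page is drifting toward the dense end of the scale.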

AI Recommendations

Each audited URL includes an AI Recommendations panel that synthesises the three scores into a plain-English summary and a prioritised action list. The summary tells you how the page performs overall and where the most impactful opportunity lies. The recommendations below it are specific and ordered by impact, so if you can only fix one thing, you fix the right one first.

Reading the results

Each URL shows all three scores side by side. You can sort and filter by any score to prioritise fixes. The panel below each score lists the specific issues found and what to do about them, most actionable first.

A page can score well on Crawlability but poorly on Semantic or Readability. All three matter for AI citation: the agent needs to be able to reach the page, understand its structure, and process its content.
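
If you work with the scores outside the interface, the same sort-and-filter triage can be sketched in a few lines. Everything here is hypothetical: the `PageScores` record, the sample URLs and scores, and the threshold of 60 are illustrative choices, not values defined by the audit.

```python
from typing import NamedTuple


class PageScores(NamedTuple):
    url: str
    crawlability: int
    semantic: int
    readability: int


def needs_attention(pages, threshold=60):
    """Return pages whose weakest score is below the threshold, worst first."""
    def weakest(page):
        return min(page.crawlability, page.semantic, page.readability)

    flagged = [page for page in pages if weakest(page) < threshold]
    return sorted(flagged, key=weakest)


# Hypothetical audit results.
pages = [
    PageScores("https://example.com/", 92, 88, 81),
    PageScores("https://example.com/pricing", 90, 45, 70),
    PageScores("https://example.com/terms", 95, 86, 38),
]

flagged_urls = [page.url for page in needs_attention(pages)]
print(flagged_urls)
```

Sorting by the weakest dimension, rather than an average, keeps a page with one failing score from hiding behind two strong ones.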

Common patterns

High Crawlability, low Semantic

The page is accessible but poorly structured. Common on pages built with heavy client-side rendering, where visible content is generated by JavaScript and absent from the raw HTML. Improving server-side rendering or adding structured HTML markup typically resolves this.

High Semantic, low Readability

The page has good structure but the content is difficult to process — common on legal, medical or highly technical pages. Add a plain-language summary or break long sentences into shorter ones.

Low Crawlability across all pages

Usually caused by an overly restrictive robots.txt that blocks AI crawlers as a group. Review your bot access policy and explicitly permit the agents you want to allow.

What’s next

Asset Evaluation

Score creative assets against brand attributes.

Search Visibility

Track domain and brand presence across engines.